Stay Compliant with OSA

What the Online Safety Act (OSA) Regulates in Video Content

Online Safety Act compliance for OTT platforms means assessing, restricting, and moderating harmful content, with a focus on child safety and platform responsibility.

| Content Category | What It Includes | OSA Rule | When It’s Risky | Why It Matters |
|---|---|---|---|---|
| Child Sexual Abuse Material | Sexual content involving minors | ❌ Prohibited | Always | Legal penalties |
| Sexual Content & Nudity | Nudity, explicit sex | ⚠️ Restricted | No age checks | Child safety |
| Violence & Harmful Content | Gore, abuse, harmful acts | ⚠️ Mitigation required | High severity | Viewer safety |
| Self-Harm & Suicide | Self-harm, suicide scenes | ⚠️ High-risk | Child exposure | Mental health |
| Hate Speech & Illegal Content | Hate, extremism, illegal acts | ❌ Remove quickly | On detection | Compliance risk |
| Alcohol, Drugs & Gambling | Substance use, betting | ⚠️ Restricted | Child access | Audience protection |

Online Safety Act Compliance Gets Complex at the Content Level

Online Safety Act compliance for OTT platforms gets harder at scale, where you must consistently detect harmful content, protect minors, and maintain platform responsibility.

How Do You Prevent Harmful Content Before Publishing?

Violence, self-harm, hate speech, and explicit content often appear without warning. Without proactive content moderation for streaming platforms, harmful material can reach viewers before action is taken.

How Do You Protect Children from Unsafe Content?

Adult content, gambling, and harmful material must be restricted from minors. Without age-gating and child safety compliance for streaming platforms, platforms risk exposing children to unsafe videos.

How Do You Prove Platform Responsibility?

Moderation is not just about removal; it requires evidence. Without content moderation audit logs, moderation workflow compliance, and publish-time moderation for OTT platforms, proving compliance becomes difficult.

Manual moderation doesn’t scale. This does.

Apply Online Safety Act compliance for OTT platforms with proactive content moderation and automated checks. Detect harmful content, self-harm, hate speech, explicit content, and child safety risks early, without relying on manual review.

Strengthen child safety compliance for streaming platforms by controlling what gets published, restricted, or removed. With publish-time moderation for OTT platforms, moderation audit logs, and platform responsibility compliance software, your platform stays compliant as content scales.

Detect, Fix, and Control Any Content Compliance Issue in One System

Identify compliance issues, configure triggers, and apply TrueComply-supported actions such as blur, mute, or disclaimers.

| Category | Trigger | Action |
|---|---|---|
| Substance Use | Smoking & tobacco, alcohol, drugs (cigarettes, vaping, drinking, pills, syringes) | Detect, add label, blur segment |
| Nudity & Sexual Content | Partial nudity, full nudity, sexual or aroused nudity, child nudity/suggestiveness | Blur segment, remove clip, detect |
| Violence & Harm | Mild, moderate, strong violence, gore, weapons, accidents, disasters | Detect, blur segment, remove clip |
| Language & Expression | Profanity, offensive language, hate speech, obscene gestures | Beep dialogue, mute dialogue, detect |
| Sensitive Scenarios | Self-harm, suicide, animal cruelty | Detect, blur segment |
| Contextual & Platform Signals | Gambling, sexual orientation/gender identity, custom triggers | Detect, add label, apply custom rules |
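
For teams that think in configuration, the category, trigger, and action mapping above could be pictured roughly as follows. This is a minimal sketch and not TrueComply's actual API or rule format: the `Detection` class, the `RULES` dictionary, the `"*"` fallback, and every key and action name are hypothetical, chosen only to mirror the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """A flagged video segment. Field names are assumptions for this sketch."""
    category: str
    trigger: str
    start_s: float
    end_s: float


# Hypothetical rules: (category, trigger) -> action to apply.
# A "*" trigger acts as a category-wide fallback.
RULES = {
    ("substance_use", "alcohol"): "add_label",
    ("substance_use", "*"): "blur_segment",
    ("nudity_sexual_content", "*"): "blur_segment",
    ("language_expression", "profanity"): "beep_dialogue",
    ("language_expression", "hate_speech"): "mute_dialogue",
    ("sensitive_scenarios", "*"): "blur_segment",
}

# With no matching rule, the segment is only recorded, not edited.
DEFAULT_ACTION = "detect"


def resolve_action(d: Detection) -> str:
    """Prefer the exact rule, then the category fallback, then the default."""
    return RULES.get(
        (d.category, d.trigger),
        RULES.get((d.category, "*"), DEFAULT_ACTION),
    )


def plan_edits(detections):
    """Return (start_s, end_s, action) tuples ordered by start time."""
    return sorted((d.start_s, d.end_s, resolve_action(d)) for d in detections)


if __name__ == "__main__":
    sample = [
        Detection("language_expression", "profanity", 12.0, 12.8),
        Detection("substance_use", "vaping", 3.0, 7.5),
        Detection("contextual_signals", "gambling", 40.0, 55.0),
    ]
    for start, end, action in plan_edits(sample):
        print(f"{start:>6.1f}s to {end:>6.1f}s: {action}")
```

Keeping the rules as plain data, separate from the lookup logic, is one way to let compliance teams adjust triggers and actions without touching the code that applies them.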

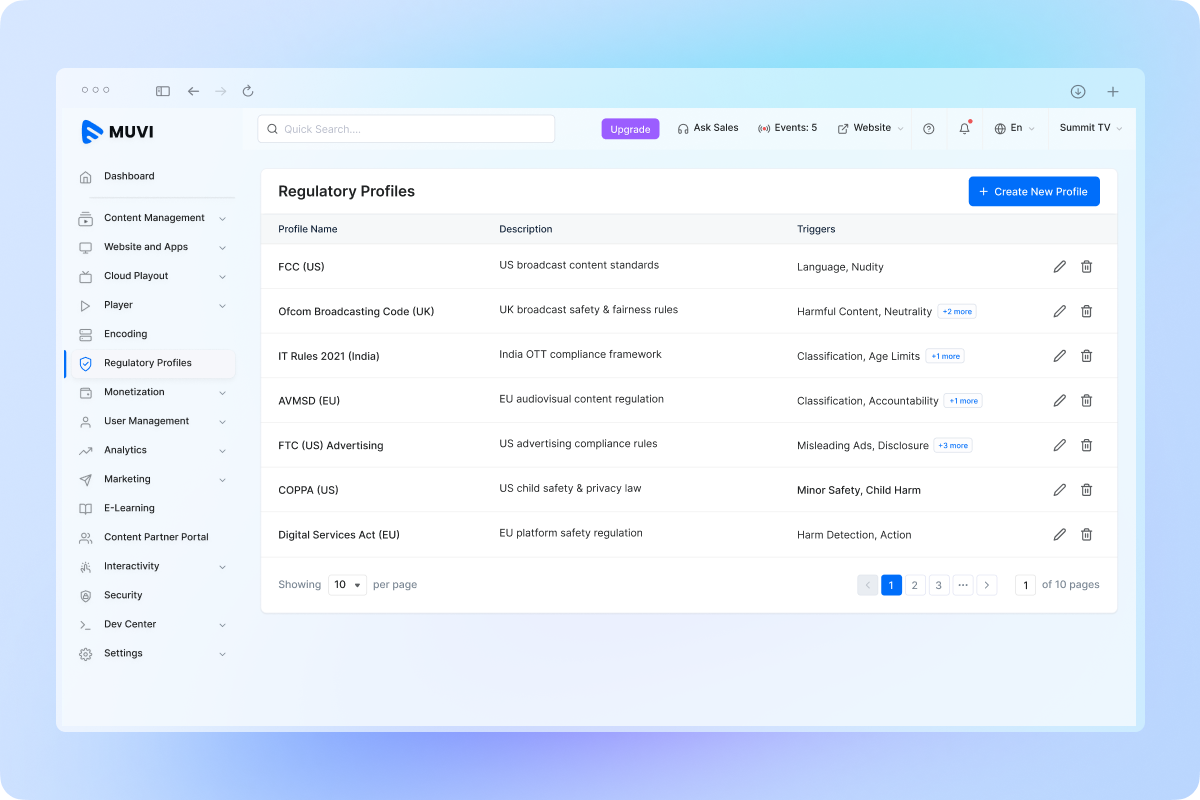

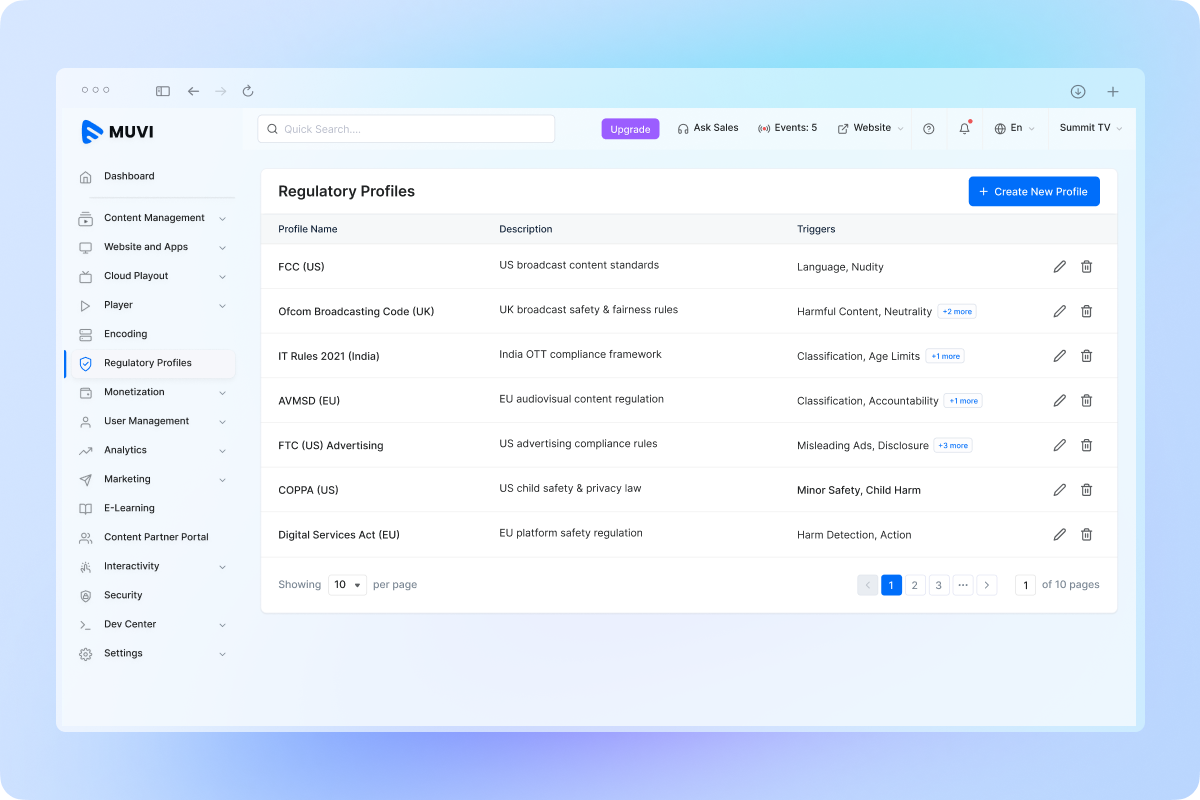

You Establish the Rules. TrueComply Enforces Them, Flawlessly.

Go Beyond OSA. Stay Compliant Across the Ecosystem

Built for broadcasters and platforms with large libraries. TrueComply is an AI-powered OSA compliance tool that can reduce review time, ensure consistent compliance, and help avoid costly violations.

Make Your Videos Compliance-Ready

Automate detection and correction

Reduce review time and operational overhead

Deliver broadcast-ready content every time