Be it vlogging, sports tournaments, or business product launches, live video has been gaining a lot of traction over the years. However, it’s hard to deliver a live streaming experience that is truly real-time. Delivering a low latency stream becomes challenging when video is distributed through an online video platform: the most obvious example is hearing your neighbors cheer before you see the goal during a live soccer tournament! In the next 5 minutes, you will learn why low latency live streaming matters and how it can be used to provide a better viewing experience.

What is Latency?

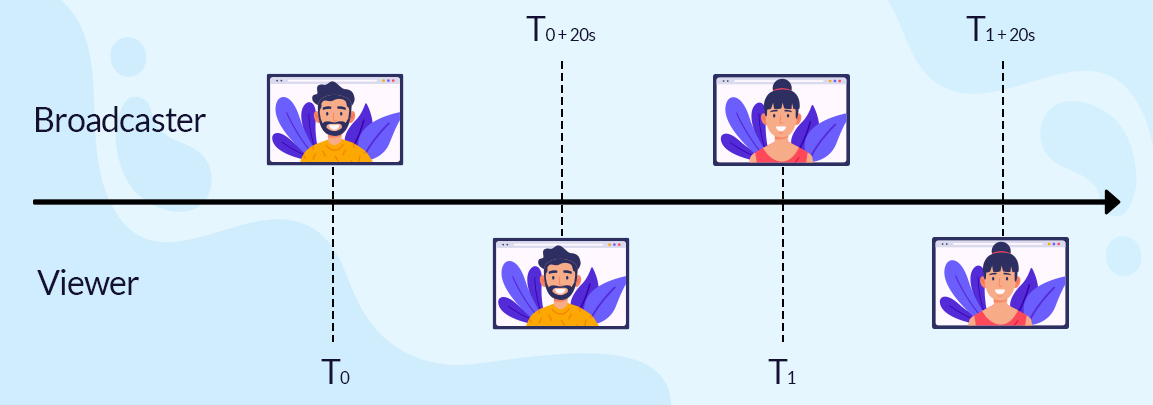

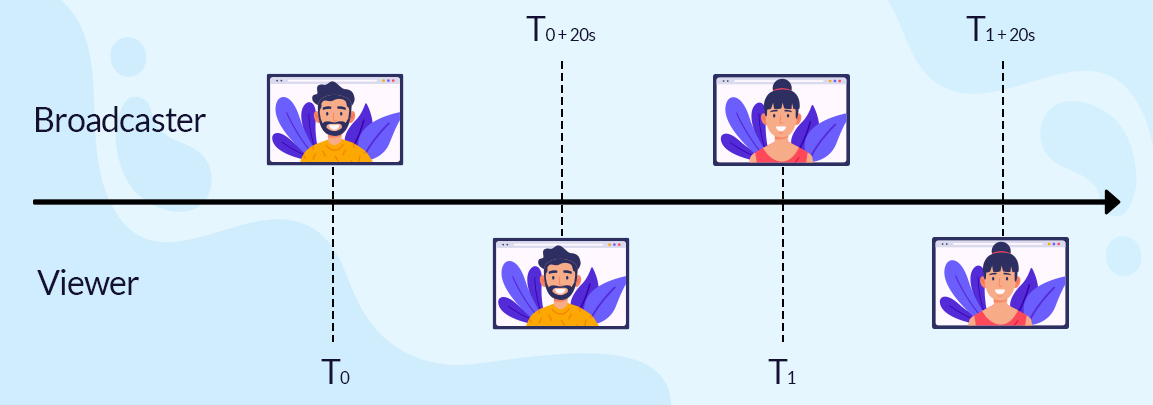

Latency generally means ‘delay’ or ‘lag’ in video streaming. Within a streaming environment, latency is the time between the moment something is captured on camera in real life and the moment it appears on the viewer’s screen. For example, your friend waves at the camera and you see the wave on the screen, say, 10 seconds later. In other words, latency is the time an action takes to travel from Point A (the camera) to Point B (the screen).

So, if you’re streaming live video, your viewers will never see you in true real time because of latency; there will always be some lag.
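To make the Point A to Point B idea concrete, here is a minimal sketch of how that delay could be computed from two timestamps. The function name and the timestamps are made up for illustration, and the sketch assumes the camera and the screen share a synchronized clock, which is rarely guaranteed in practice.

```python
from datetime import datetime, timedelta


def glass_to_glass_latency(captured_at: datetime, displayed_at: datetime) -> float:
    """Return the delay in seconds between capture (Point A) and display (Point B)."""
    delay = (displayed_at - captured_at).total_seconds()
    if delay < 0:
        # A negative delay usually means the two clocks are not in sync.
        raise ValueError("display time precedes capture time; check clock sync")
    return delay


# Hypothetical timestamps: your friend waves, and the wave shows up 10 seconds later.
captured = datetime(2024, 1, 1, 12, 0, 0)
displayed = captured + timedelta(seconds=10)
print(glass_to_glass_latency(captured, displayed))  # 10.0
```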

Factors Causing Latency

An end-to-end live streaming pipeline is complex, consisting of a range of components including encoding, transcoding, delivery, and playback. Each adds a layer of latency, and the total can vary significantly depending on the components used and the architecture of the pipeline. So, let’s look at the factors contributing to latency in a live stream:

1. Encoding & packaging: The latency introduced here is very sensitive to the encoding and compression techniques being used. There are several compression standards to choose from, and whichever one is used, the process needs to be tuned to add as little delay as possible.

2. CDN: Delivering live video at scale requires most streaming service providers to leverage content delivery networks or CDNs. As a result, the video needs to propagate between different caches, introducing an additional layer of latency.

3. Last-mile delivery: Latency also depends on the end-user’s network connection, for example, whether they are on a cabled network at home, connected to a Wi-Fi hotspot, or using a mobile connection. Also, if they are geographically far from the closest CDN endpoint, they may experience additional latency.

4. Video player buffer: Online video players need to buffer video to ensure a smooth playback experience. Buffer sizes are often defined in media specifications, but note that the larger the buffer, the higher the playback latency.
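One way to reason about these four factors is as a simple latency budget, where each stage contributes its own delay to the total. The sketch below is only an illustration: the stage names mirror the list above, and every number is an assumed placeholder rather than a measurement from a real pipeline.

```python
# Illustrative per-stage delays in seconds; all values are assumptions, not measurements.
PIPELINE_DELAYS = {
    "encoding_and_packaging": 4.0,
    "cdn_propagation": 2.0,
    "last_mile_delivery": 1.0,
    "player_buffer": 18.0,
}


def total_latency(delays: dict[str, float]) -> float:
    """Sum the delay contributed by every stage of the pipeline."""
    return sum(delays.values())


def largest_contributor(delays: dict[str, float]) -> str:
    """Return the name of the stage that adds the most delay."""
    return max(delays, key=delays.get)


print(total_latency(PIPELINE_DELAYS))        # 25.0
print(largest_contributor(PIPELINE_DELAYS))  # player_buffer
```

Laying the budget out this way makes it easier to see which stage is worth optimizing first. With these placeholder values it is the player buffer.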

For more information on live streaming latency, read Live Streaming Latency: A Glass to Glass Analysis [Whitepaper]

You see, ‘live’ is not actually live: you are bound to witness latency. So, if several seconds of latency is normal in live streaming, what exactly is low latency?

Well, here’s the thing: there are no standards that define “high” and “low” latency. It’s subjective. The popular HLS streaming protocol typically results in 30–45 seconds of latency with its default settings. So, low latency means reducing that 30-45 second lag to 5-7 seconds, similar to what viewers get with traditional broadcast. That’s what is considered “low latency” in live streaming. In short, low latency is latency that is lower than the average in that field of broadcasting.

Where is Low Latency Required?

Of course, nobody wants high latency, but there are contexts where low latency becomes especially important. For most streaming use cases, the typical 30 to 45-second lag isn’t problematic. But for some streaming contexts, low latency live streaming emerges as a business-critical consideration.

1. Video chat: From video conversations on Zoom to news reporting, ultra-low latency is crucial for delivering the correct message in ‘real-time’. Unwanted lag in video conferences causes confusion, resulting in miscommunication or total communication breakdowns. Fast data transmission and low latency are indispensable so that both parties can have a seamless and uninterrupted conversation.

2. Second-screen use cases: If you are watching a live soccer game on a second-screen app that lags several seconds behind the live broadcast, the delay ultimately kills your user experience. In this case, the second-screen app needs to at least match the latency of linear TV to deliver a consistent viewing experience.

3. Online video games: There’s little point in playing online video games with noticeable latency. The game must reflect the action in real-time on the player’s screen. Delays between an action and its appearance on the screen seriously compromise the gaming experience.

4. Sports betting and online casinos: Activities such as auctions and sports-track betting are usually fast-paced. Low latency enables players to gamble and place bets in real-time, or as close to it as possible, so that everybody is on the same page.

Streaming Protocols & their Role in Delivering Low Latency Streams

Streaming protocols play an indispensable role in delivering low latency streams as they ensure that your encoder and the streaming service are on the same page when it comes to live streaming.

RTMP

RTMP, or Real-Time Messaging Protocol, was Macromedia’s answer to low latency streaming. While RTMP delivers high-quality, speedy streams efficiently, playback requires a Flash-based player, which is not supported on iOS devices. That’s why more people are moving away from it and implementing alternatives.

WebRTC

A relatively new and advanced protocol that is becoming an increasingly popular choice is WebRTC, where RTC stands for Real-Time Communication. Designed to work natively in a web browser, WebRTC removes the need for a plug-in architecture like Flash.

The protocol is well suited to real-time data transfer and video conferencing. However, deploying WebRTC requires a complex server setup.

HLS & MPEG-DASH

HLS and MPEG-DASH are alternatives to WebRTC and can reduce latency to around 5-7 seconds by splitting the processed video into small streamable segments. The video is packaged in a container format known as CMAF (Common Media Application Format), and when each segment is further divided into smaller chunks, the approach is called chunked CMAF. When these chunks are delivered using HTTP/1.1 chunked transfer encoding, latency can be reduced even further, to roughly 3-7 seconds. The smaller the video segments, the lower the latency.
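The link between segment size and latency can be sketched with some simple arithmetic. The example below assumes a player that holds back three whole segments before it starts playing. That figure is an illustrative assumption, not a value taken from any particular player, and the function name is made up for this sketch. Encoding, CDN, and network delays are left out on purpose.

```python
def estimated_player_latency(segment_seconds: float, buffered_segments: int = 3) -> float:
    """Estimate the delay added by a player that holds back whole segments.

    buffered_segments=3 is an assumed default for illustration only.
    """
    if segment_seconds <= 0 or buffered_segments < 0:
        raise ValueError("segment length must be positive and buffer count non-negative")
    return segment_seconds * buffered_segments


# Compare a few hypothetical segment lengths (in seconds).
for seg in (10, 6, 2, 1):
    print(f"{seg}s segments -> ~{estimated_player_latency(seg):.0f}s behind live")
```

Under these assumptions, 10-second segments put the player about 30 seconds behind live, while 2-second segments bring that down to about 6 seconds.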

Wrapping Up

If you want streaming technology that provides high responsiveness, consider advanced low latency live streaming features. With Muvi Live, you can stream high-quality video with a latency of 10 seconds or less. With adaptive multi-bitrate streaming, your users get a smooth viewing experience that scales automatically during peak hours.

Sign up for a 14-day Free Trial to start delivering lightning-fast video streams!
